Luckily for us, computing doesn’t stand still. My guess is that we have another decade or two to squeeze more gains out of our current chip technology and computer architecture. Some day John von Neumann’s architecture of processor and memory, first described in 1945, will no longer meet our computing needs. Someday Gordon Moore’s Law from 1965-the number of transistors per chip doubles about every two years-will poop out. Graphics processing units, often used for artificial intelligence and bitcoin mining, can have thousands of processor cores. So 50 years later, have we reached the limit? For the past 20 years, microprocessors have boosted performance by adding more computing cores per chip. Oil? Good luck finding and drilling it without smart machines. All of retail-ask Walmart about its inventory system. I’m convinced that most wealth created since 1971 is a direct result of the 4004.

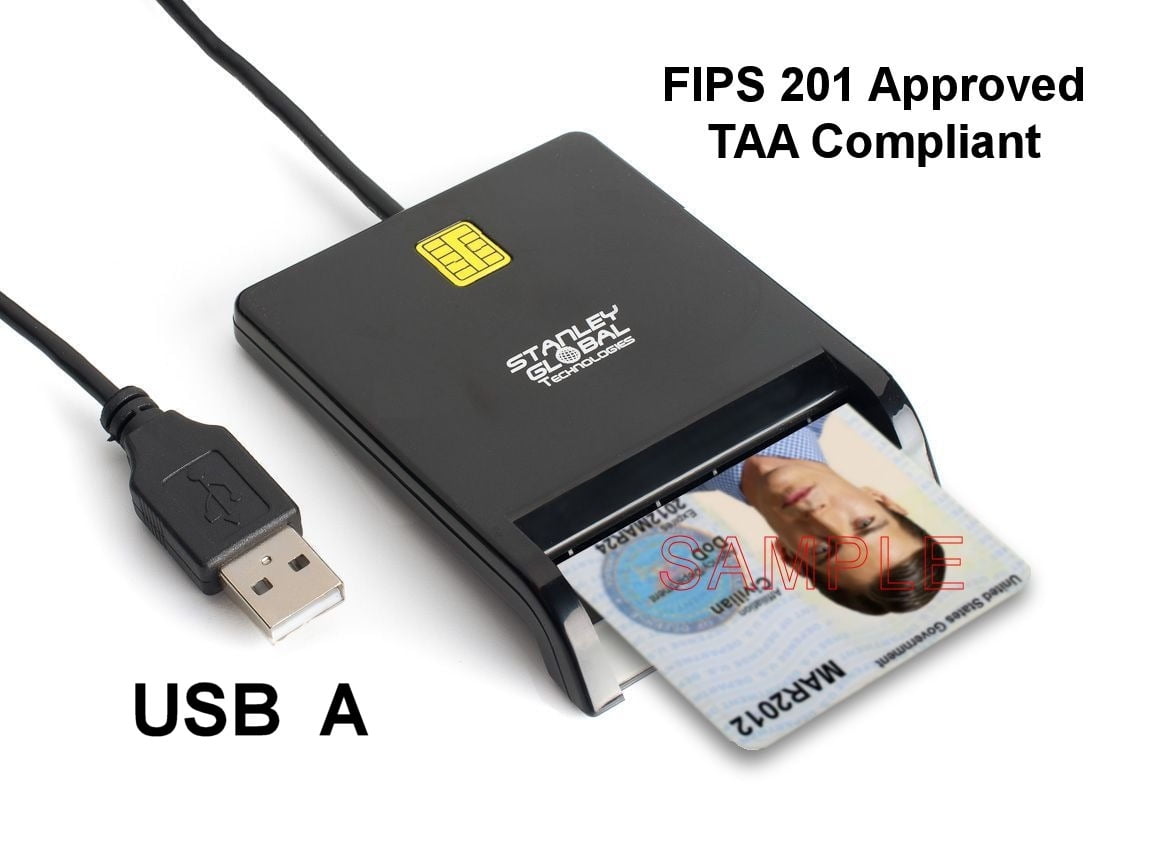

SMART CARD READER WALMART SOFTWARE

Apple can design in California, manufacture anywhere, and add its software at any point in the manufacturing process. It enables global supply chains, for good or bad (mostly good). The separation of hardware and software, of design and control, is underappreciated.

It also has created an infinitely updatable world-bugs get fixed and new features are rolled out without a change in hardware. Mobile computing paved the way for smartphones, robotic vacuum cleaners, autonomous vehicles, moisture sensors for crops-even GPS tracking for the migratory patterns of birds. Today’s automobiles often need 50 or more microprocessors to drive down the road, although with the current chip shortage, many are sitting on lots waiting for chips. These days, everyone’s aunt has at least one. They get smaller, faster, cheaper and use less power every year as they spread like Johnny’s apple seeds through society.

SMART CARD READER WALMART CODE

Silicon processors are little engines of human ingenuity that run clever code scaled to billions of devices. In “Take the Money and Run" (1969), Woody Allen’s character interviews for a job at an insurance company and his interviewer asks, “Have you ever had any experience running a high-speed digital electronic computer?" “Yes, I have." “Where?" “My aunt has one."

SMART CARD READER WALMART MOVIE

Now that everyone has a computer in his pocket, one of my favorite movie scenes isn’t quite so funny. The repairman then asked why he was laughing. Hoff in the 1980s, he told me that he once took his broken television to a repairman, who noted a problem with the microprocessor. That is at least a billionfold increase in computer power in 50 years. By comparison, Apple’s new M1 Max processor has 57 billion transistors doing 10.4 trillion floating-point operations a second. IBM used the next iteration, the Intel 8088, for its first personal computer. Intel introduced the 3,500-transistor, eight-bit 8008 in 1972 the 29,000-transistor, 16-bit 8086, capable of 710,000 operations a second, was introduced in 1978. The half-inch-long rectangular integrated circuit had a clock speed of 750 kilohertz and could do about 92,000 operations a second. Four bits of data could move around the chip at a time. Using only 2,300 transistors, they created the 4004 microprocessor.

Engineers Federico Faggin, Stanley Mazor and Ted Hoff were tired of designing different chips for various companies and suggested instead four chips, including one programmable chip they could use for many products. asked Intel to design 12 custom chips for a new printing calculator. In 1969, Nippon Calculating Machine Corp. sold PDP-8 minicomputers to labs and offices that weighed 250 pounds. Feeding decks of punch cards into a reader and typing simple commands into clunky Teletype machines were the only ways to interact with the IBM computers. Workers were told to evacuate on short notice, before the gas would suffocate them. Back then, IBM mainframes were kept in sealed rooms and were so expensive companies used argon gas instead of water to put out computer-room fires.
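For readers who want to check the arithmetic behind the figures quoted in this piece, here is a rough Python sketch. It uses only numbers from the text (2,300 transistors and about 92,000 operations a second for the 4004, 57 billion transistors and 10.4 trillion floating-point operations a second for the M1 Max, a 50-year span, and a doubling every two years). The function and variable names are made up for illustration. Note that a four-bit operation and a floating-point operation are not the same unit of work, so the raw throughput ratio is only a crude proxy for "computer power."

```python
import math


def moores_law_projection(start_count, years, doubling_period_years=2.0):
    """Project a transistor count forward, assuming a fixed doubling period."""
    return start_count * 2 ** (years / doubling_period_years)


# Figures quoted in the article.
TRANSISTORS_4004 = 2_300
TRANSISTORS_M1_MAX = 57_000_000_000
OPS_PER_SEC_4004 = 92_000
FLOPS_M1_MAX = 10.4e12
YEARS = 50

# What a strict two-year doubling would predict after 50 years.
projected = moores_law_projection(TRANSISTORS_4004, YEARS)

# Actual growth in transistor count, and the doubling period it implies.
transistor_ratio = TRANSISTORS_M1_MAX / TRANSISTORS_4004
implied_doubling_years = YEARS / math.log2(transistor_ratio)

# Raw operations-per-second ratio (ignores word width and operation type).
throughput_ratio = FLOPS_M1_MAX / OPS_PER_SEC_4004

print(f"Projected transistors after {YEARS} years: {projected:,.0f}")
print(f"Actual transistor growth: {transistor_ratio:,.0f}x")
print(f"Implied doubling period: {implied_doubling_years:.2f} years")
print(f"Raw throughput growth: {throughput_ratio:,.0f}x")
```

Under these assumptions, a strict two-year doubling predicts roughly 77 billion transistors, the same order of magnitude as the 57 billion quoted for the M1 Max, and the implied doubling period comes out just over two years.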